Vision is realized through action; the term “active vision” merely emphasizes the importance of perception-action coupling. In fact, most visual animals perform saccadic eye movements, smooth target tracking, and involuntary visual fixation. These visual behaviors have functional implications for visual acuity, motion vision, visual guidance, and depth estimation. From a bioengineering standpoint, we are interested in both the functions and the implementation of visual behaviors. Flying insects perform a full repertoire of visual behaviors, ranging from target tracking and obstacle negotiation to navigation. We have developed an insect-scale motion capture protocol that tracks the 3D kinematics of a dragonfly’s head, body, and wings. This approach allows us to reconstruct the visual gaze, body states, and wing dynamics of freely behaving insects. Understanding the heuristics and the neural implementation underlying these visual behaviors can help us develop bio-inspired machine vision systems that incorporate appropriate motor gestures.

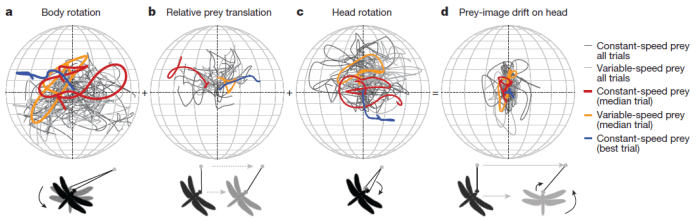

For example, the dragonfly keeps its eyes on the prey during interception, and the movements that hold its gaze on the prey bear the signature of a predictive controller. The drift of the prey across the dragonfly's visual field can be decomposed into three components: rotation of the dragonfly's body, translation of the prey relative to the dragonfly, and rotation of the dragonfly's head.
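To make the decomposition concrete, here is a planar (yaw-only) sketch. This is a simplification we assume for illustration, and the symbols are our own rather than those of the original analysis: $\lambda$ is the world-frame bearing of the prey from the dragonfly, $\psi$ the body yaw, $\phi$ the head yaw relative to the body, $\mathbf{r} = (r_x, r_y)$ the prey position relative to the dragonfly, and $e$ the angular offset of the prey from the gaze direction.

$$
\begin{aligned}
e &= \lambda - \psi - \phi, \qquad \lambda = \operatorname{atan2}(r_y, r_x),\\
\dot e &= \underbrace{\frac{r_x \dot r_y - r_y \dot r_x}{r_x^2 + r_y^2}}_{\dot\lambda\ \text{(prey relative translation)}} \;-\; \underbrace{\dot\psi}_{\text{body rotation}} \;-\; \underbrace{\dot\phi}_{\text{head rotation}}.
\end{aligned}
$$

Holding the prey still in the visual field ($\dot e = 0$) therefore requires a head rate of $\dot\phi = \dot\lambda - \dot\psi$, i.e. the head must cancel the body rotation and follow the bearing change caused by the prey's relative translation.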

The dragonfly uses head rotation to compensate both for its own body rotation and for the relative translation of the prey. The former requires an accurate forward model of its own body dynamics, whereas the latter calls for an extremely sophisticated prey model. In addition to these internal models, it must also use an efference copy to reconcile the wide-field visual signal (e.g. optic flow) with its self-generated movements, so that the flight controller remains coherent. How does a dragonfly learn its own flight dynamics to tune the forward model? What is the nature of the prey model? How do visual orientation and target tracking affect the acquisition of other critical visual information? These are all fascinating questions that we would like to tackle. The answers… will indeed provide implementation solutions for a highly efficient active vision system.
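Why internal models matter for gaze control can be illustrated with a toy simulation. This is a minimal sketch under loudly stated assumptions, not the dragonfly's controller or our analysis pipeline: motion is planar (yaw only), the body yaw trajectory and the constant-velocity prey trajectory are invented, and both the forward model and the prey model are assumed to be perfect. The predictive head uses the two models to cancel the predicted body rotation and bearing change; the reactive head only steers on the error it saw after a hypothetical 20 ms visual delay. All function names and parameter values are hypothetical.

```python
import math

# Hypothetical parameters for illustration only (not measured dragonfly values).
DT = 0.001      # simulation step [s]
STEPS = 500     # 0.5 s of simulated pursuit
DELAY = 20      # visual latency of the reactive controller [steps], i.e. 20 ms
GAIN = 30.0     # feedback gain of the reactive controller [1/s]


def body_yaw(t: float) -> float:
    """Hypothetical body yaw angle [rad], standing in for the flight motor output."""
    return 0.3 * math.sin(2.0 * math.pi * 5.0 * t)


def prey_bearing(t: float) -> float:
    """World-frame prey bearing [rad], assuming constant relative velocity."""
    rx, ry = 0.5 + 0.2 * t, 0.1 + 1.0 * t  # prey position relative to dragonfly [m]
    return math.atan2(ry, rx)


def simulate(predictive: bool) -> float:
    """Return the peak absolute gaze error |e| = |lambda - psi - phi| in radians."""
    phi = prey_bearing(0.0) - body_yaw(0.0)  # head starts on target, so e(0) = 0
    errors = []
    for k in range(STEPS):
        t = k * DT
        e = prey_bearing(t) - body_yaw(t) - phi
        errors.append(e)
        if predictive:
            # Feedforward: the (assumed perfect) forward model predicts the next
            # body yaw and the prey model predicts the next bearing; the head
            # rate cancels body rotation and follows the bearing change.
            t_next = t + DT
            phi_rate = ((prey_bearing(t_next) - prey_bearing(t))
                        - (body_yaw(t_next) - body_yaw(t))) / DT
        else:
            # Reactive: steer the head on the error observed DELAY steps ago.
            delayed = errors[k - DELAY] if k >= DELAY else 0.0
            phi_rate = GAIN * delayed
        phi += phi_rate * DT
    return max(abs(err) for err in errors)


if __name__ == "__main__":
    print(f"predictive peak error: {simulate(True):.2e} rad")
    print(f"reactive peak error:   {simulate(False):.2e} rad")
```

With perfect models the predictive head holds the gaze error at zero (up to floating-point rounding), while the delayed reactive head lets the prey slip by more than a tenth of a radian. Real internal models are imperfect, so a practical controller would presumably combine such feedforward terms with feedback on the residual error.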