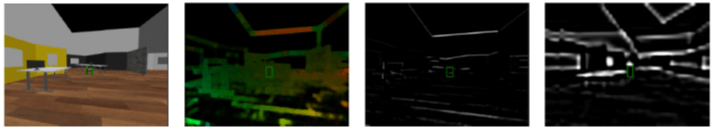

Visually guided insects move through the world with impressive speed and robustness. Despite their small brains and the limited time available to process visual information, they navigate without explicitly recognizing each object. Instead, they rely on a suite of built-in motion detection mechanisms that extract the relevant object motion from the scene. This approach is extremely fast and energy-efficient. To further reduce the computational load, many insects strategically steer their eyes to carry out visual measurements. This form of "active vision" offers several advantages and has not yet been fully exploited by the bioinspired engineering community. In this project, we integrate two motion detection pathways of insect vision (i.e., wide-field motion detection and moving target detection). Our aim is to explore how, combined with appropriate steering of the visual sensor, these pathways can work synergistically to extract information about both static and moving obstacles.